A higher level of autonomy in unmanned aerial vehicles (UAVs) expands their potential for deployment across various real-world applications. This autonomy relies heavily on simultaneous localization and mapping (SLAM) and the capability to generate safe and precise trajectories toward a target.

VI-SLAM systems are widely used in UAVs due to their high accuracy, real-time performance, and autonomy, especially in GPS-denied environments such as indoor spaces or obstructed areas [1–5]. Additionally, for quadrotors with limited payload capacity and battery life, cameras serve as ideal onboard sensors for navigation. However, a key drawback of VI-SLAM is its rapid decline in accuracy when encountering texture-less regions. A common approach to improving accuracy involves keeping specific features or landmarks within the field of view (FOV) [6–8].

Nowadays, the advancements in artificial intelligence (AI), particularly deep learning applied to semantic segmentation [9] and object detection [10], have achieved high accuracy and performance. These techniques enable to label the regions with different characteristics semantically. Semantic information is often incorporated as a term or constraint in optimization frameworks to help avoid textureless or problematic regions, such as lakes and oceans, which can cause significant drift or failures in pose estimation [11,12]. Additionally, it helps prioritize high-textured regions, thereby improving the quality of pose estimation [13–19]. Moreover, semantics have also been employed in the multi-robot planning problem [20].

Ensuring safe arrival at the destination also requires effective obstacle avoidance. However, many existing studies assume the environment is static during collision checking. This limits their deployment in real-world scenarios. Because the real world is exactly the cluttered and dynamic environment.

Common collision-checking methods involve decomposing free space into convex regions such as sequences of axis-aligned cubes [21], convex regions from seeding [22, 23], or creating safe flight corridors (SFC) by inflating pre-existing trajectories (often the global trajectory) [24–28]. Collision-checking can be performed using either discretizing the trajectory into points or outer polyhedral representations. While discretized points are computationally intensive and do not guarantee collision-free paths between sampled points, increasing the number of samples to improve accuracy further adds to the computational burden [29–32].

To reduce the burden of computation, outer representation techniques enclose the trajectory within a polyhedron. If this polyhedron remains inside the free space, the entire trajectory is considered collision-free. For example, in polynomial trajectory optimization [33, 34], it is verified whether the outer polyhedral representation of each trajectory segment is contained within the free space.

A common approach to obtaining this polyhedral representation is by using the convex hull of the control points from the Bernstein or B-Spline basis [35–37]. However, in cluttered and dynamic environments, the free space is significantly reduced. A more compact outer representation improves the likelihood of successful trajectory generation while reducing computational time.

Getting the idea from the prior publications [38–40], this work uses the MINVO basis [41] instead of the Bernstein or B-Spline basis. Depending on the polynomial degree n, MINVO [41] can yield a significantly smaller volume.

Decomposition is especially challenging in cluttered and dynamic environments. In dense environments, it is difficult to construct a tight representation of free space. In dynamic settings, an additional dimension of time makes the decomposition much more complicated, and sometimes it is infeasible. To eliminate the need for decomposition, this work imposes a constraint that verifies the existence of a separating plane between the UAV’s trajectory and obstacle trajectories. This plane constraint is incorporated into the optimization process [38, 40].

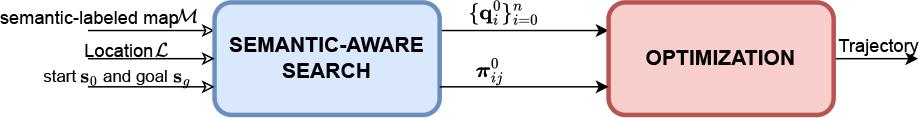

This study presents a novel workflow that guides UAVs to prioritize high-texture areas while avoiding texture-less and hazardous regions in dynamic environments. The proposed approach consists of two main stages: (i) Semantic-Aware Trajectory Initialization, called semantic-aware A* search, prioritizes safe and high-texture areas while avoiding textureless and hazardous regions. The output of this step serves as the initial guess for the second stage. (ii) Dynamic-Aware Optimization accounts for environmental dynamics by combining: (a) Eliminating free space decomposition and replacing it with a separating plane constraint. (b) Utilizing the MINVO basis. At the same time, the trajectory is also energy-optimal and satisfies dynamic constraints.

The UAV is modeled by the geometric shape and state at t. Its shape is a set of vertices in 3D space 𝓥U = [V0, V1,…] ⊂ ℝ3. And the state vector

The environment in which the UAV operates is a cluttered and dynamic environment. It is modeled by a metric-semantic map ℳ. It consists of unknown regions ℳunknown and known regions ℳknown. These known regions contain static obstacles 𝒪static, dynamic obstacles 𝒪dyn and the regions which semantically-labelled as texture-high ℳatt or hazardous/texture-less ℳrep. So ℳ = ℳunknown ∪ 𝒪dyn ∪ 𝒪static ∪ ℳatt ∪ ℳrep.

At this time, the problem is how to generate a feasible trajectory that guides the UAV from the initial state s0 to the goal state sg safely and accurately within the environment ℳ, while ensuring the minimization of control energy by leveraging the semantic information available in that semantic map.

To solve this problem, several key subproblems need to be addressed as follows:

- -

subproblem 1: Defining the trajectory.

- -

subproblem 2: HOW TO check collision in cluttered and dynamic environment even during trajectory generation. Details are presented in section 3 below.

- -

subproblem 3: HOW TO leverage semantic information to improve the process of trajectory generation for a certain purpose, more detailed in section 4.

- -

subproblem 4: Formulating and solving the programming optimization. It is described in more detail in section 5.

We use the method of polynomial trajectory planning [28, 33] with clamped uniform B-Splines. So, the UAV’s trajectory

The problem of UAV trajectory planning in a cluttered and dynamic environment. The trajectory is generated portion by portion to ensure real-time performance and feasibility

In this paper, we use the cubic splines (i.e., p = 3). This balances the dynamic feasibility of a UAV and computational efficiency [40]. So, the input control u(t) is jerk j(t) and itis constant at the same interval j(j) = const.

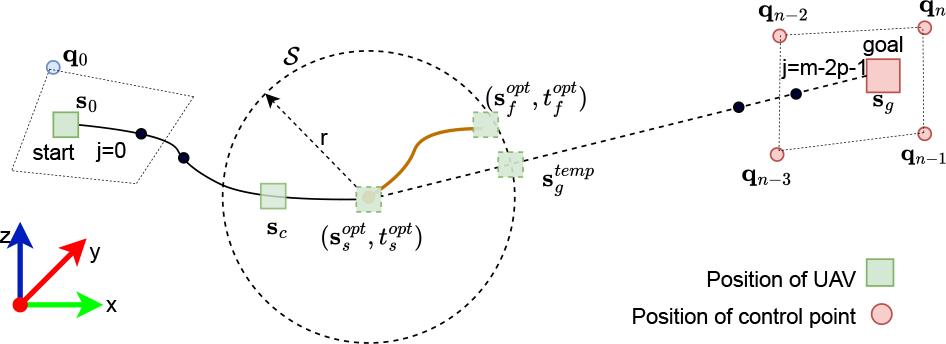

To ensure the real-time performance and feasibility, the consuming time of trajectory generation needs to be limited within a time interval of δ(t). This is achieved by generating only the portion of the trajectory (illustrated by Fig. 2, it is the golden-brown segment) that lies within a sphere 𝒮 with the radius r. During re-planning, r remains fixed. The trajectory generation starts at a time when UAV is staying at sc and at the moment before the time of completing the execution of the previous portion of the trajectory by δ(t) (illustrated in Fig. 2).

Thus, at this point, the starting point is the moment when the execution of the previous portion of the trajectory is completed

As described in section 1, to alleviate the burden of computing, All of the trajectories are represented by outer polyhedrals. And then checking whether or not the intersection of them for collision.

The bounding polyhedron (or outer representation) of UAV’s trajectory, which is defined in section 2, can be generated from its control points. They are indexed using the symbol l. The number of control points for each segment is one more than the degree of the B-spline, p + 1. However, we use MINVO because, as demonstrated in [41], it provides a much tighter representation. In detail, with degree n = 3 shows that, the volume reduces 2.36 and 254.9 times smaller than the ones obtained by the Bernstein and B-Spline bases, respectively. When n = 7, these ratios increase to 902.7 and 2.997.1021, respectively.

Thus, from each interval of the B-spline, the control B-spline points

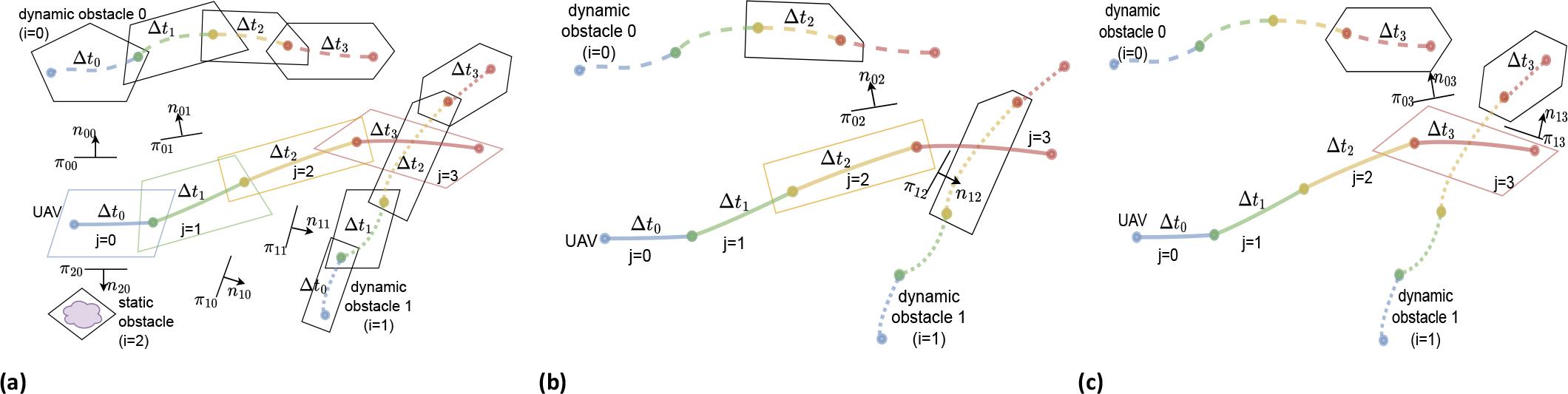

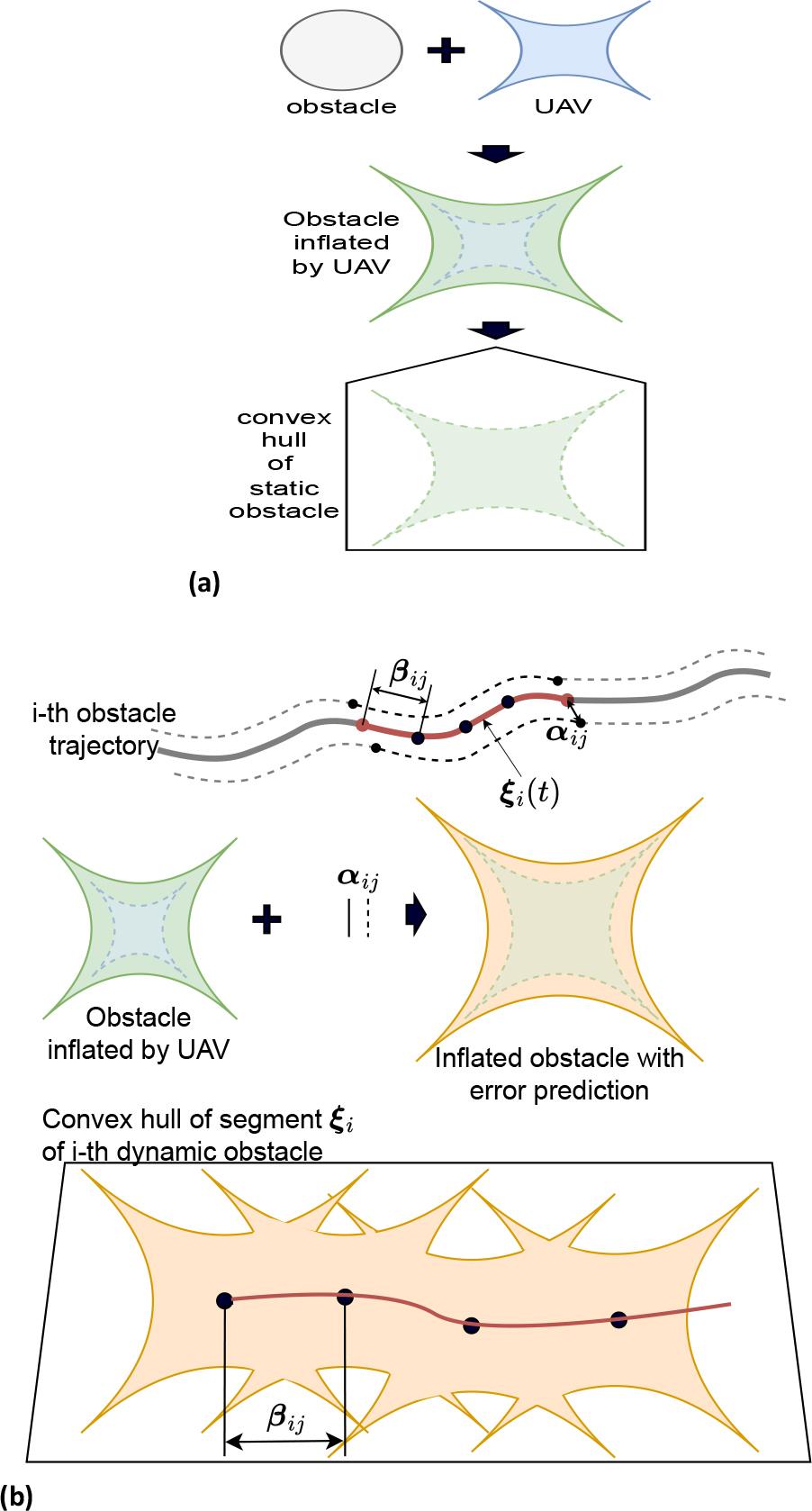

In the environment, there are I obstacles including static and dynamic ones. The i-th obstacle is symbolled by i (i ∈ I). An obstacle is characterized by its trajectory ξi(t) : = [ξx(t), ξy(t), ξz(t)]T and dimension

For dynamic obstacles, its trajectory is predicted segment by segment with the prediction error αij (illustrated by Fig. 4b). Each segment of i-th obstacle’s trajectory, ξij(t), is corresponding to a j-th interval time Δtj of the UAV’s trajectory. In this case, the convex hull is calculated as follows: First, it is inflated by the UAV’s size, similar to a static obstacle. Next, it is expanded with the prediction error αij. Then, it is slid along its predicted trajectory with a sampling time of βij. The entire occupied space of the obstacle,

The problem now is how to predict the obstacle’s motion trajectory. In the scope of this paper, the trajectory prediction function is assumed to be precomputed. Some particular obstacle’s trajectories are used for simulation, with details provided in section 6.

In subsection 3.2, the convex hull of the obstacles has already accounted for the UAV’s size, so the UAV is now treated as a point of mass. This means that the UAV and the obstacle do not collide if the MINVO convex hull

- –

nij is the normal vector defining the separating hyperplane.

- –

dij is a bias term shifting the hyperplane.

- –

𝓒ij is the convex region representing the obstacle.

- –

{\cal Q}_j^{MV} - –

I is the total number of obstacles.

- –

J is the set of UAV trajectory intervals.

In other words, they do not collide if there exists a separating hyperplane πij (characterized by the normal vector nij and bias dij) between their convex hulls, described by Eq.1. The first inequality of Eq.1 ensures that all points c in the obstacle set 𝓒ij lie on one side of the plane. The second one ensures that all points q in the UAV’s trajectory set

An illustration of an outer polyhedral representation and collision-checking that includes static and dynamic obstacles, as well as UAV is shown in Fig. 3. Fig. 3a illustrates all of the convex hulls and collision checking at the first and second intervals. Fig. 3b and 3c illustrate the convex hull and collision checking at the third and fourth intervals, respectively. This problem is solved using GLPK [43] or Gurobi [44].

Outer representation of trajectories of UAV, dynamic obstacles (for instance, obstacle 0 and 1), static obstacle (for example, obstacle i = 2) and Collision-checking by separating planes πij: (a) Collision-checking at the 1st and 2nd interval of UAV trajectory. (b) Collision-checking at the 3rd interval of UAV trajectory.(c) Collision-checking at the 4th interval of UAV trajectory

This algorithm is inspired by the original A* [45] for path-finding based on not only traditional costs but also the semantic information about the environment. In particular, its resulting path is to prioritize the rich-informative regions while avoiding hazardous or low-informative areas. So itis called as semantic-aware A*. This is achieved by introducing additional cost values into the total cost function f. Each MINVO control point serves as a node in the search. All open nodes are maintained in a priority queue openSet, where elements are ordered in ascending order off.

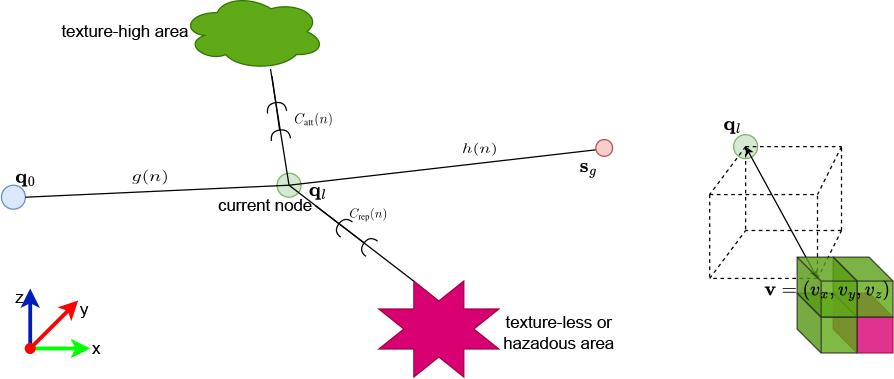

With semantic-labeled map ℳ, the regions with high information tend to attract the UAV, modeled by Catt. Whereas the hazardous or low-informative regions tend to repel the UAV, modeled by Crep (described in Fig. 5).

Outer representation of the obstacles: (a) For static obstacle. (b) For dynamic obstacle

The total cost of semantic-aware A* includes traditional costs, along with repulsive and attractive costs arising from texture-less/hazardous regions (vivid pinkish-magenta color) and high-texture areas (fresh green color), respectively. Along with HOW TO calculate the Euclidean distance in 3D space used as primitive for calculating the costs

So, at this time, the total cost function f(.) includes four terms, where g(.) is the sum of the distances (between successive control points) from q0 to the current node ql (cost-to-come), h(.) is the distance from the current node ql to the goal sg (heuristics of the cost-to-go), Crep(.) is for repelling UAV away from the low-texture regions, while conversely, Catt(.) is for attracting towards informative areas, as modeled in Eq. 2.

The position of each voxel in the map ℳ is

However, their influence on the total cost (according to Eq. 2) is opposite. By increasing the total cost, Catt prioritizes regions with smaller distances. In contrast, Crep decreases the total cost, thereby prioritizing regions with larger distances, meaning it tends to push the trajectory away from those regions. They are calculated by Eq. 3, where the sign “_” indicates att or rep.

The weights λg, λh, λatt λrep ∈ ℝ+ in Eq. 2 determine the influence of each component on the total cost f(·) If a weight is greater than 1, the corresponding component has a stronger influence (for example, if λatt > 1, the trajectory is more strongly guided toward the attractive region). On the other hand, when the weight is less than 1 (0 < λ < 1), the corresponding component has a weaker influence compared to the others. If the weight equals 1, it has a balanced influence according to its original value.

The algorithm is represented as a pseudo-code in Alg. 1. Firstly, control points q0, q1, q2 is determined from the initial state

1: (q0, q1, q2) ←

2: openSet ← q2

3: gCost, fCost ← ∞

4: gCost[q0] ← 0

5: Calculating fCost[q0] by Eq. 2 and 3

6: while (openSet is not empty) or timeout do

7: ql ← First item of openSet

8: if

9:

10: qn-1 ← qn-2

11: qn ← qn-1

12: return

13: end if

14: openSet.

15: [isCols,πij] ←

16: if

17: continue

18: end if

19: for Δv ∈

20:

21: gCosttemp ← gCost[ql] +

22: if gCosttemp < gCost[ql+1] then

23: Seq[ql+1] ← qc

24: gCost[ql + 1] ← gCosttemp

25: CalculatingfCost[ql+1] by Eq. 2 and 3

26: if ||ql+1 — qk||∞ > δ then

27: openSet ← ql+1

28: end if

29: end if

30: end for

31: end while

32: return [

The loop of semantic-aware A* search is run until openSet queue is empty or out of time: The first item, which is with f value is lowest, is popped (line 7) and remove it (line 14) if it simultaneously does not touch the target (line 8) and satisfies some conditions (line 16). The search process is considered complete if ql is within a predefined distance ϵ from the intermediate goal

For ql node to be accepted, it must satisfy several conditions (line 16): it must be inside the sphere

The result is a hyperplane added to πij and a binary variable isCols indicating whether or not a collision occurs (line 15).

Next, the best neighbor of ql is expanded and added to openSet queue: For each value of Δv, which is uniform-sampled ensuring the limits vmax and amax. The neighbor is computed (line 20) using a time step of Δt/p where

Based on the initial trajectory (converted from

This problem subjects to some constraints:

- (i)

The starting point of this inverval is the endpoint of previous one. That is,

{\bf{s}}(t_s^{opt}) = {\bf{s}}_s^{opt} - (ii)

When sg is inside the sphere 𝒮, it means that the last segment of trajectory is optimized (that is, j = m − 2p − 1 or

{\bf{s}}(t_g^{opt}) = {{\bf{s}}_g} {\bf{v}}(t_g^{opt}) = {\bf{0}} {\bf{a}}(t_g^{opt}) = {\bf{0}} - (iii)

Ensuring the safety (collision-avoiding) in a dynamic environment, detailed in subsection 3.3.

- (iv)

Guaranteeing that the generated segment of trajectory remains inside sphere 𝒮, detailed in section 2.

- (v)

The trajectory must comply with kinematic constraints. Specifically, velocity and acceleration must not exceed UAV’s physical limits vmax and amax, respectively. Because the trajectory is represented by B-Splines, which is a continuous function of time. So directly imposing these constraints (vmax, amax) at every single point in time along this continuous trajectory would lead to an infinite number of constraints, making the optimization problem computationally intractable.

Moreover, the control points q parameterize the entire trajectory segment. Therefore, by placing constraints on these control points (velocity v and acceleration a), we can indirectly influence and bound the physical velocity and acceleration throughout the interval. The bound of the velocity vmax and acceleration amax of control points are inferred the physical ones, respectively. This problem (Eq. 4) is solved by Gurobi [44].

In the experiments, we use the configuration of the system as following:

- -

Hardware: 16 cores Intel Core i7-10875H @ 2.30GHz; GPU Nvidia TU117GLM [Quadro T1000 Mobile]; RAM memory of 32 GB.

- -

Software: Ubuntu 20.04 LTS; ROS noetic [46] serves as the middleware framework for communication between the planner, simulator, and visualization/logging tools. It provides standardized message passing and modular integration of different software components.

For all planning and collision checking, the UAV is modeled as a sphere of radius rUAV = 0.1 (m) which upper-bounds the vehicle body and rotor sweep. While rotor disks are omitted in the figures for visual clarity, this inflated model guarantees safe clearance in all experiments. To guarantee real-time performance and feasibility, the planning horizon is limited to a sphere of radius r = 4.0 m, as detailed in Section 2.

Moreover, to focus on the trajectory planning algorithms, it is assumed that the UAV can perfectly track the trajectories generated by the planner.

This section evaluates the semantic-aware A* algorithm (presented in section 4) in two aspects: the influence of semantic information and the avoidance of dynamic obstacles with the following configuration: λg = 1.0, λh = 1.0, runtime = 0.1 second, degree of B-spline = 3 (cubic b-spline), number of segments = 6 and one trefoil-knot-based dynamic obstacle.

The trajectory of the dynamic obstacle, ξ(t), is modeled as a trefoil knot [47, 48]. Although the trefoil knot does not reflect realistic obstacle motion, it provides several advantages:

- (i)

Challenging yet structured – its 3D, non-trivial trajectory is more demanding than linear or circular paths, making it a strong test for collision avoidance.

- (ii)

Repeatable – its mathematical definition ensures identical, reproducible runs.

- (iii)

Controlled complexity – the trajectory is well understood, enabling systematic evaluation of algorithm performance.

- (iv)

Visually distinct – its clear shape facilitates observation and validation in simulations.

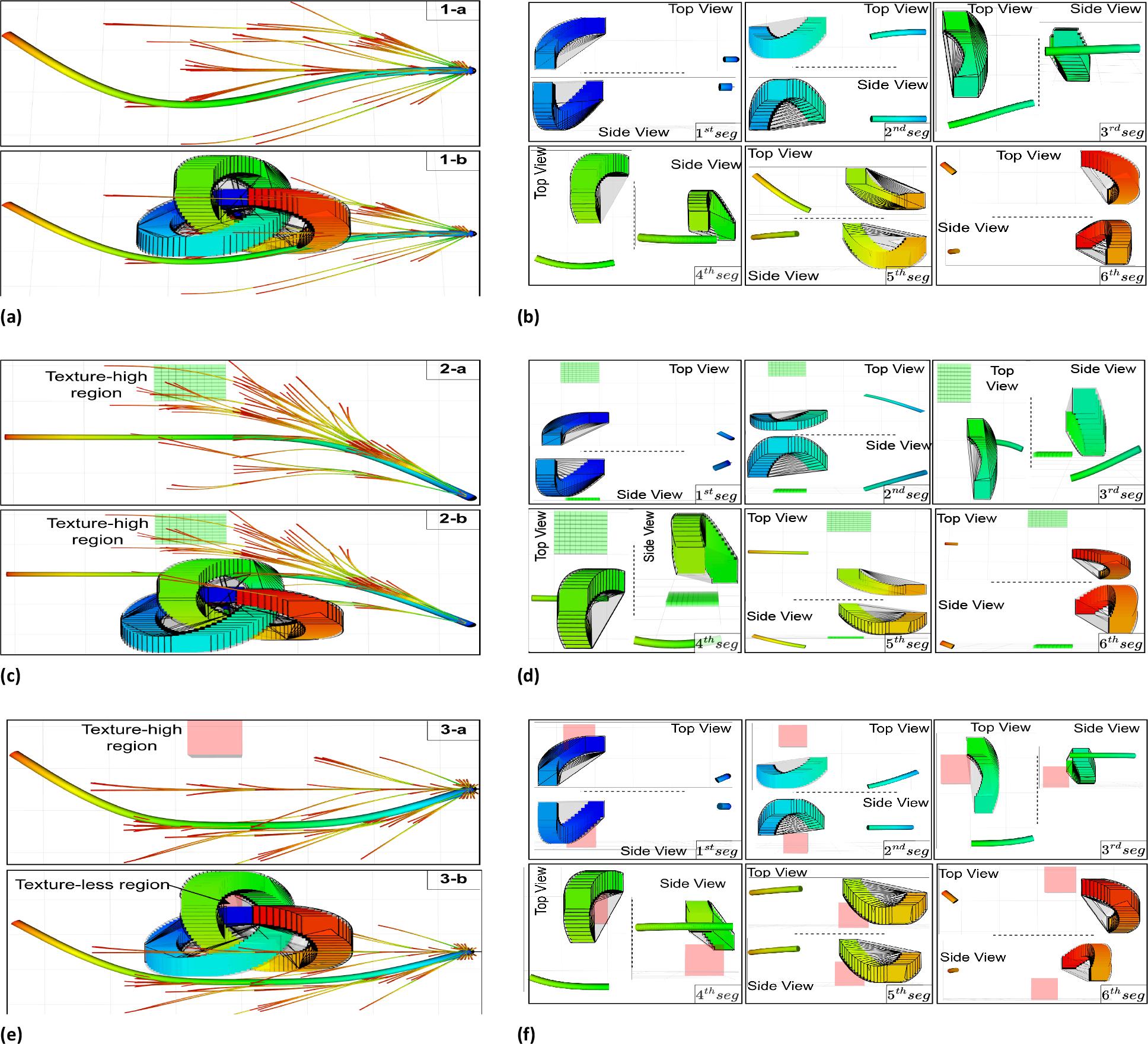

The first case considers no influence of semantic information with λatt = 0, λrep = 0. In this case, the search process tends toward the goal while avoiding obstacles (Fig. 6a). In the second case, testing the algorithm in an environment containing a texture-high region (the green area in Fig. 6c and 6d) with λatt = 2.0, λrep = 0. The experimental results in Fig. 6c show that the search process tends to shift toward the texture-high region compared to the first case. The third case examines the algorithm in an environment with a texture-less or unsafe region (the red area in Fig. 6e and 6f) with λatt = 0,λrep = 0.5. The results indicate that the search process tends to avoid this region.

The experimental results of semantic-aware A* with one dynamic obstacle in three cases (Without semantics, Considering the influence of the attractive region and Considering the influence of the repulsive region): (a), (c), and (e) show the complete trajectories, while (b), (d), and (f) display the corresponding individual trajectory segments (1-6) for each case

In all of these cases, the results in (Fig. 6b, 6d and 6f) show that dynamic obstacle avoidance is fully ensured at each segment (from 1st to 6th one). Because the obstacles are still considered to be in motion (no temporarily-static) during the collisionchecking process. The experimental results (Figure 6) demonstrate that the proposed semantic-aware A* algorithm behaves as expected, showing a tendency to move toward regions rich in information while avoiding information-poor areas.

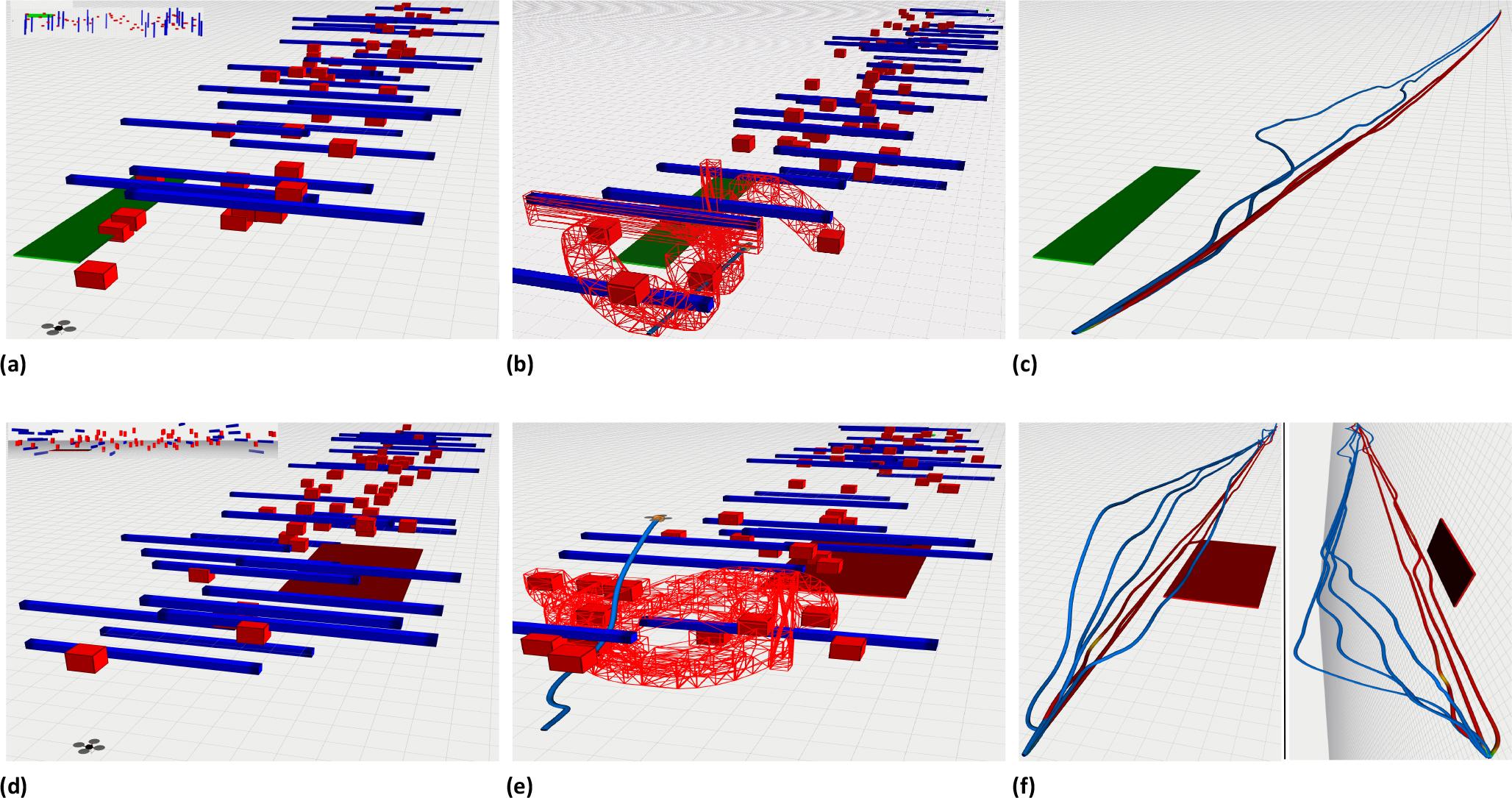

In this section, we evaluate the system’s capability to simultaneously leverage the semantic information – both to avoid and/or to prioritize traversing certain regions – while operating in a dynamic environment, by comparing it with the MADER system [38], which does not utilize semantic information. The evaluation is conducted in a dynamic environment measuring 70 x 4.0 x 4.0 meters (Fig. 7a and 7d), where 65% of the obstacles are dynamic – represented by red cubes, each sized 0.8 x 0.8 x 0.8 (m) – while the remaining 35% are static obstacles, depicted as blue rectangular boxes, each measuring 0.4 x 0.4 x 8.0 (m).

Experiments in dynamic corridor environment: (a) The environment includes dynamic and static obstacles (the red cube and the blue rectangular box, respectively), as well as a rich-information region (the green rectangular box); (b) The quadrotor flies in a dynamic environment, with outer polyhedra (red boxes) surrounding obstacles; (c) The dark blue and dark red trajectories come from our suggestion and MADER [38], respectively; (d-f) The corresponding setup, flight, and trajectory results for a scenario including a low-texture or hazardous region (red rectangular box)

Two simulation scenarios are conducted. The first features a dynamic environment containing a high-texture region, visually indicated by a green rectangle (Fig. 7a, 7b and 7c). The second includes a poortexture or hazardous region, represented by a red rectangle. Both scenarios share the same UAV dynamic constraints, with a maximum velocity of vmax = [6.0 6.0 6.0] m/s and a maximum acceleration of amax = [20 20 10] m/s2, corresponding to the velocity and acceleration limits, respectively. Additionally, in the first scenario, the goal position is set to (75.0, −10.0, 1.0) m, whereas in the second scenario, it is set to (75.0, −1.0, 1.0) m. The runtime of the MILP phase is bounded between 0.05 and 0.35 second.

In the first scenario, simulations are conducted by MADER [38] and our suggession (λatt = 2.0), followed by a comparison of the resulting trajectories. The simulation results show that the UAV is capable of navigating safely in a dynamic environment (Fig. 7b), while also exhibiting a tendency to pass through the high-texture region (the dark blue trajectory in Fig. 7c).

Similarly, in the second scenario, considering the low-texture or hazardous area corresponds to λrep = 2.0. The resulting trajectory shows that the UAV tends to avoid these regions (the dark blue trajectory in Fig. 7f), while still successfully reaching the goal within the dynamic environment (Fig. 7e).

This work introduces a complete workflow for semantic-aware trajectory planning that enables UAVs to navigate autonomously in cluttered, dynamic environments while simultaneously exploiting semantic information to prioritize or avoid specific regions depending on task objectives. Compared to non-semantic planners (such as MADER), which do not leverage semantics, our approach achieves more efficient and context-aware navigation, with trajectories that tend to avoid hazardous zones and approach information-rich regions.

The proposed approach combines a semantic-aware A* initialization, which biases trajectories toward safe and informative regions, with a dynamic-aware optimization that refines the path using separating plane constraints and the MINVO basis while ensuring energy efficiency and dynamic feasibility.

Nevertheless, several limitations remain. The optimization currently considers only the UAV’s position and not its orientation, which may constrain applicability in tasks requiring viewpoint control. Our simulations also do not yet report quantitative state-estimation errors under semantic versus non-semantic settings, and dynamic obstacles are assumed to follow known trajectories rather than stochastic, uncertain motions.

Concrete directions for future work include extending the formulation to explicitly handle UAV orientation and perception-aware objectives, incorporating online estimation of dynamic obstacle motion with uncertainty, and validating the approach in hardware experiments with real UAVs. Another promising direction is to adapt or learn the semantic weights (λatt, λrep) online using reinforcement or imitation learning, thereby tailoring behavior to specific missions while maintaining robustness.

Overall, this work represents a first step toward bridging high-level semantic understanding with low-level dynamic feasibility, paving the way for safer and more intelligent UAV autonomy in complex real-world environments.