Fig. 1.

Fig. 2.

Fig. 3.

Fig. 4.

Fig. 5.

Fig. 6.

Fig. 7.

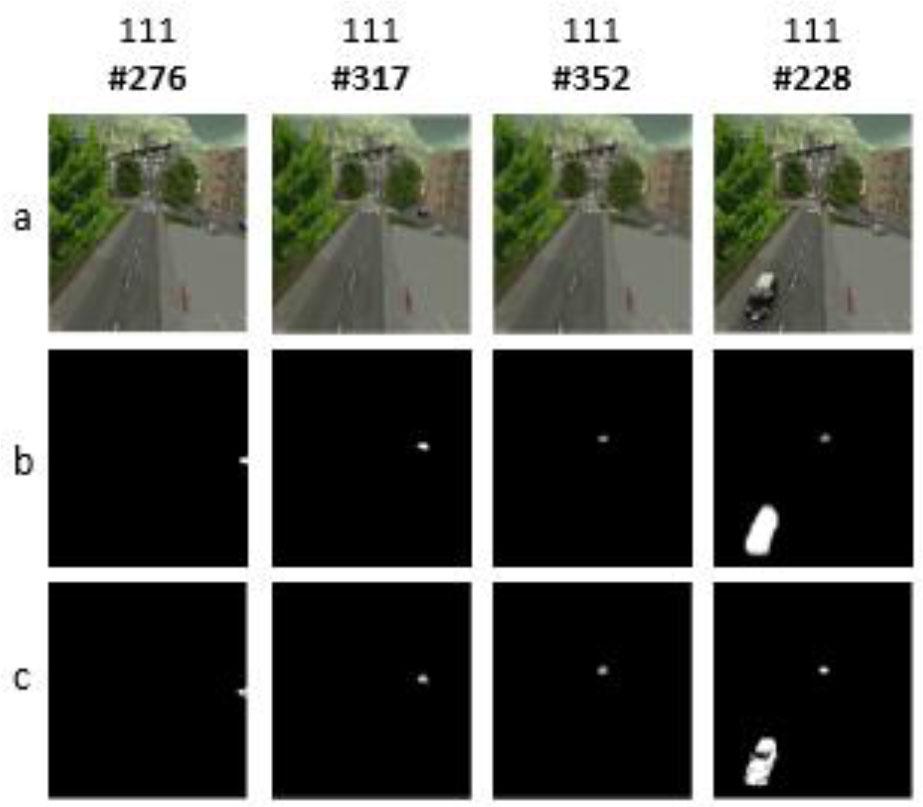

Fig. 8.

Fig. 9.

Fig. 10.

Fig. 11.

Fig. 12.

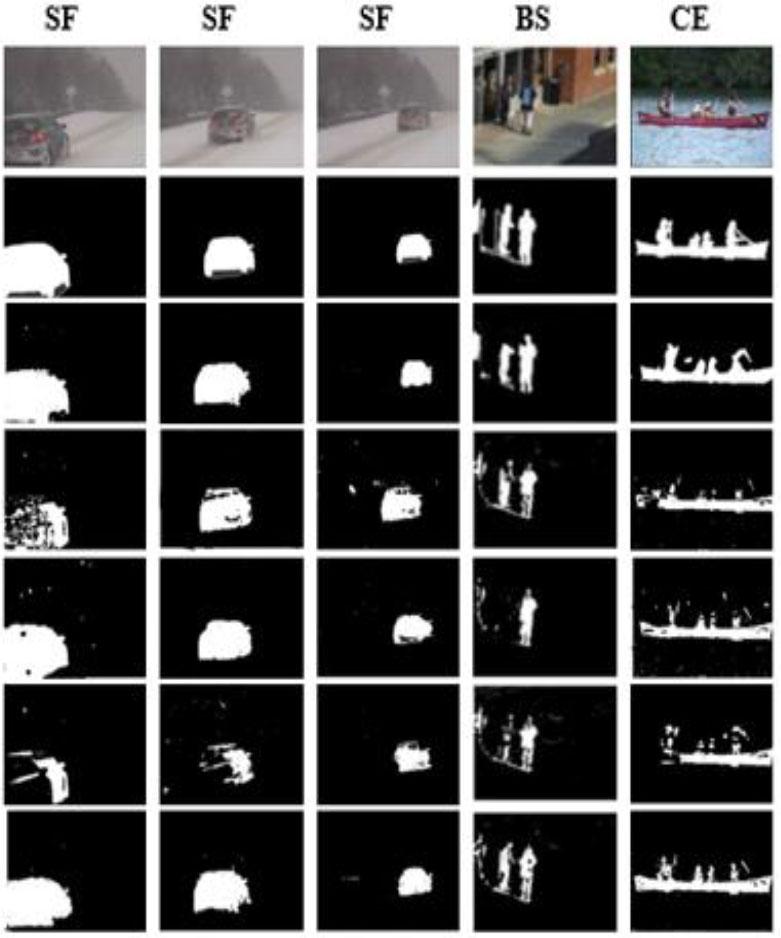

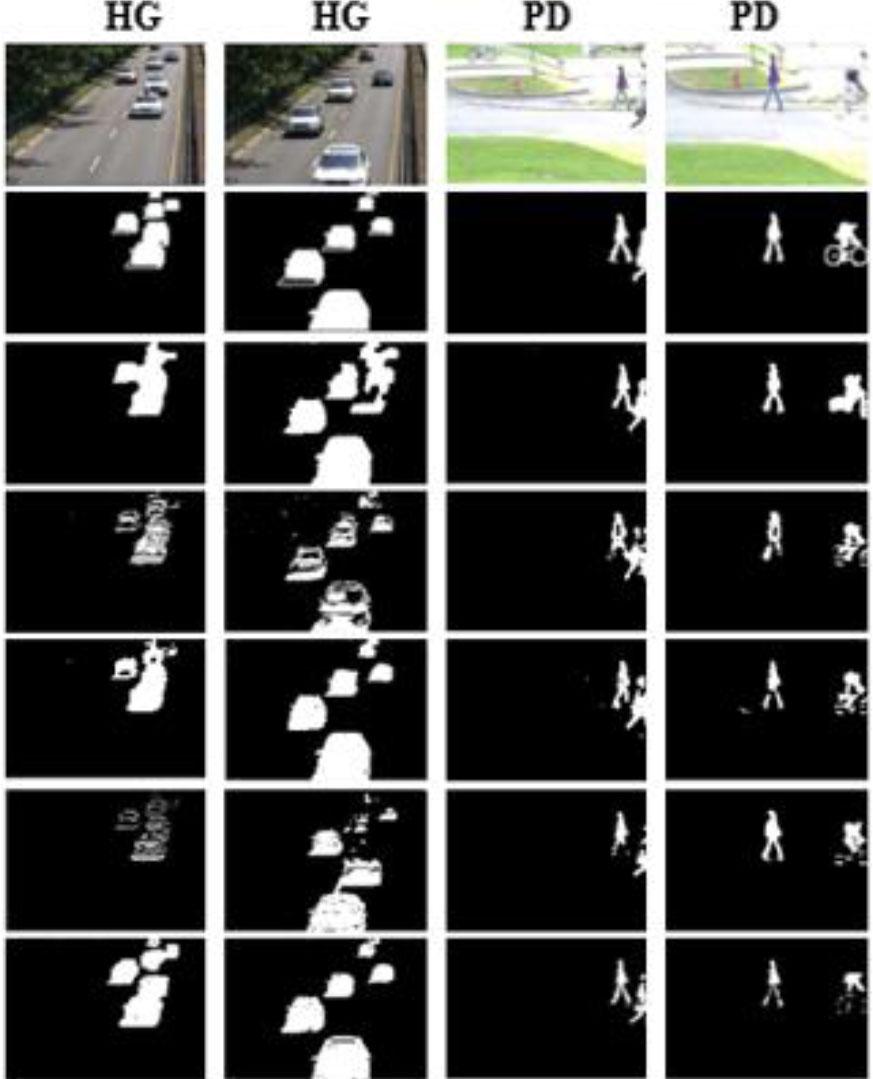

Comparative assessment of F-measure across four categories using four methods on the LASIESTA dataset. Each row presents the results for a specific method, while each column displays the average scores for each category.

| Methods | I_SI | I_CA | I_BS | O_SU | Overall |
|---|---|---|---|---|---|
| GMM [31] | 0.8328 | 0.8272 | 0.36941 | 0.7240 | 0.6880 |
| GMM_Zivk [26] | 0.9054 | 0.8320 | 0.5330 | 0.7100 | 0.7450 |
| Cuevas [32] | 0.8805 | 0.8440 | 0.6809 | 0.8568 | 0.8155 |
| Our approach | 0.9089 | 0.8415 | 0.7021 | 0.8938 | 0.8390 |

Mean F-measure and standard deviations for different methods

| Methods | Mean F-M (µ) | Standard Deviation (σ) |
|---|---|---|
| MOD-BFDO | 0.8535 | 0.0920 |
| SuBSENSE [24] | 0.8257 | 0.1013 |
| DeepBS [27] | 0.8490 | 0.1296 |
| SC_SOBS [25] | 0.7158 | 0.1306 |
| GMM_Zivk [26] | 0.6696 | 0.1232 |
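The means and deviations above can be recomputed from the per-category F-measures listed in the six-category CDnet 2014 comparison. The following is a minimal sketch, not the authors' code: the dictionary name `F_MEASURES` and the helper `summarize` are hypothetical, and the use of the sample standard deviation (n − 1 denominator) is an assumption that happens to agree with the tabulated σ for the rows shown.

```python
from statistics import mean, stdev

# Per-category F-measures copied from the six-category CDnet 2014 comparison
# (Baseline, Bad weather, Dy. Backg, Shadow, Cam. Jitter, low frame rate).
F_MEASURES = {
    "MOD-BFDO": [0.9409, 0.8834, 0.9051, 0.8785, 0.8332, 0.6800],
    "SuBSENSE": [0.9503, 0.8619, 0.8177, 0.8646, 0.8152, 0.6445],
}


def summarize(scores):
    """Return (mean, sample standard deviation) of a list of scores."""
    # Assumption: sigma is the sample standard deviation (n - 1 denominator).
    return mean(scores), stdev(scores)


for method, scores in F_MEASURES.items():
    mu, sigma = summarize(scores)
    print(f"{method}: mu={mu:.4f}, sigma={sigma:.4f}")
```

Using the population standard deviation (`statistics.pstdev`) instead would give noticeably smaller values than those tabulated, which is why the sample form is assumed here.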

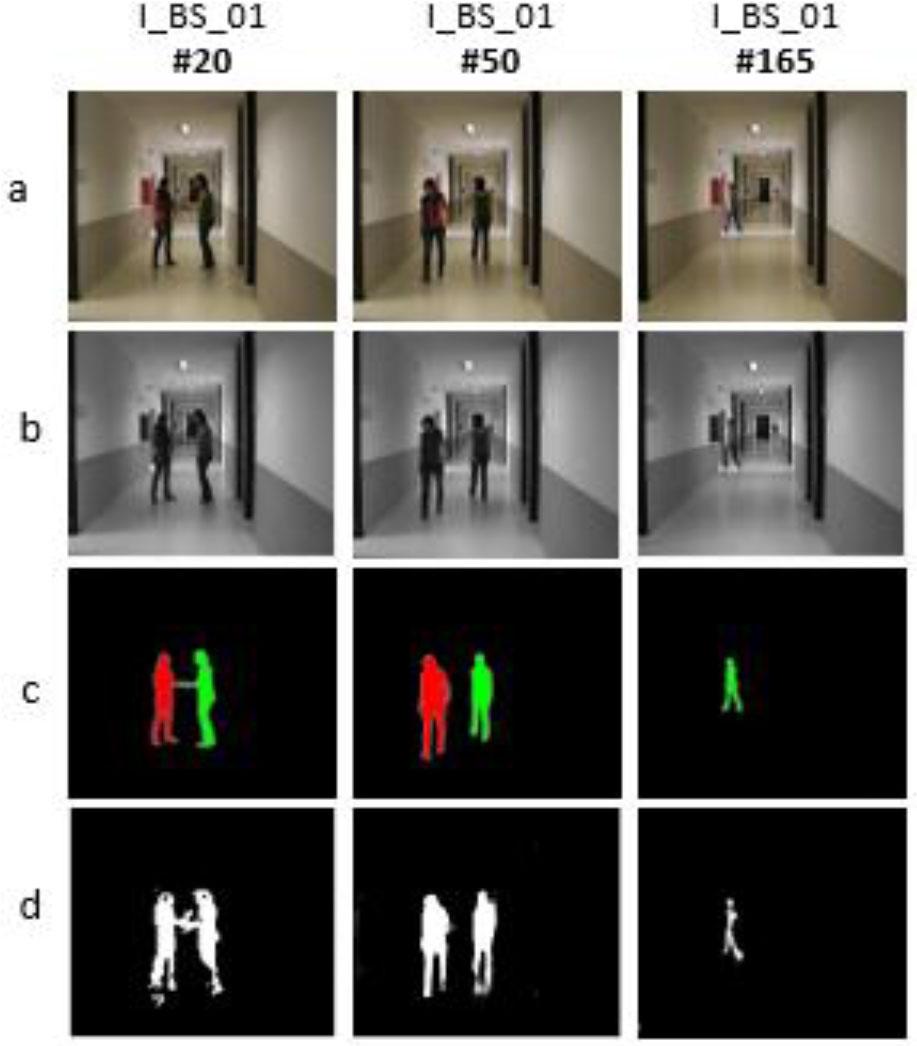

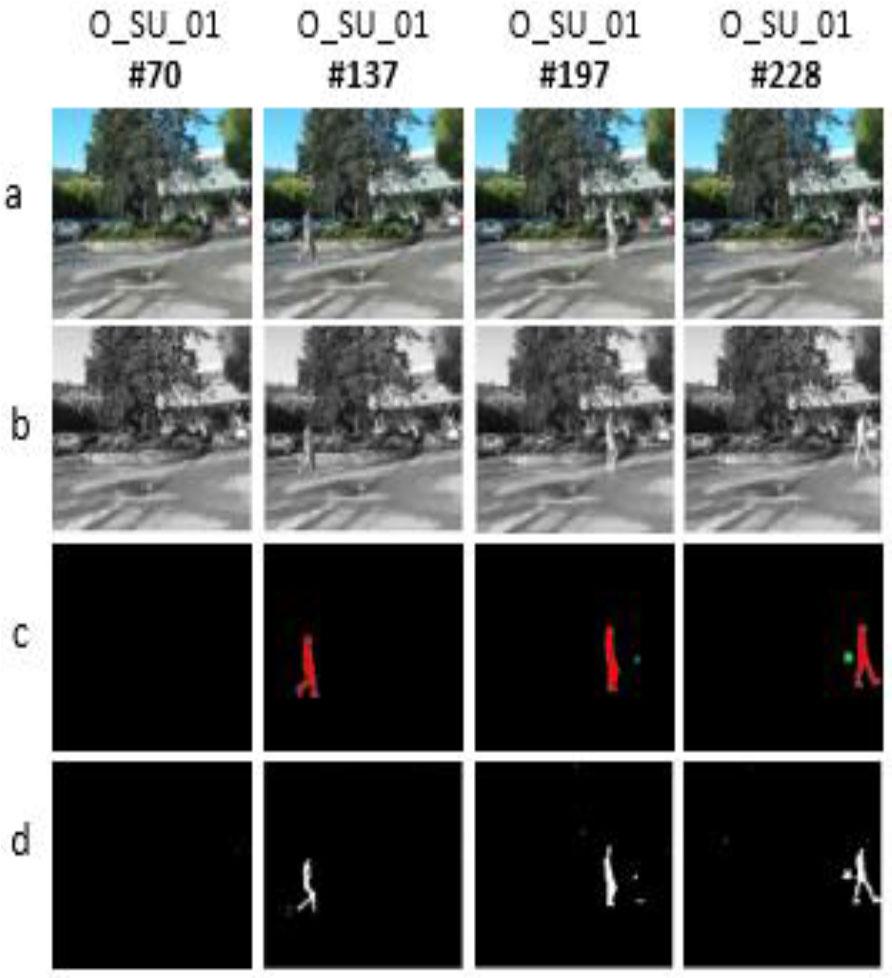

Results obtained by the proposed algorithm on the LASIESTA dataset

| Category | RE | PWC | F-M | PR |
|---|---|---|---|---|
| I_SI | 0.8969 | 0.5501 | 0.9089 | 0.9219 |
| I_CA | 0.7930 | 1.2835 | 0.8415 | 0.9250 |
| I_BS | 0.7015 | 0.4164 | 0.7120 | 0.7457 |
| O_SU | 0.8868 | 0.1917 | 0.8938 | 0.9038 |
| Average | 0.8195 | 0.6104 | 0.8390 | 0.8741 |

Z-scores for MOD-BFDO vs other methods

| Comparison | z-Score |
|---|---|
| MOD-BFDO vs SuBSENSE | 0.498 |
| MOD-BFDO vs DeepBS | 0.069 |
| MOD-BFDO vs SC_SOBS | 2.11 |
| MOD-BFDO vs GMM_Zivk | 2.93 |
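The z-scores above appear consistent with a two-sample z-statistic computed from the tabulated means and standard deviations. The sketch below illustrates that calculation under stated assumptions: the sample size `N_CATEGORIES = 6` (one F-measure per CDnet 2014 category) is inferred, not given in the source, and the names `STATS` and `z_score` are hypothetical.

```python
import math

N_CATEGORIES = 6  # assumption: one F-measure per CDnet 2014 category

# (mean F-measure, standard deviation) from the table of means and deviations.
STATS = {
    "MOD-BFDO": (0.8535, 0.0920),
    "SuBSENSE": (0.8257, 0.1013),
    "DeepBS": (0.8490, 0.1296),
    "SC_SOBS": (0.7158, 0.1306),
    "GMM_Zivk": (0.6696, 0.1232),
}


def z_score(mu_a, sigma_a, mu_b, sigma_b, n=N_CATEGORIES):
    """Two-sample z-statistic for two means with equal sample size n."""
    standard_error = math.sqrt((sigma_a ** 2 + sigma_b ** 2) / n)
    return (mu_a - mu_b) / standard_error


for other in ("SuBSENSE", "DeepBS", "SC_SOBS", "GMM_Zivk"):
    z = z_score(*STATS["MOD-BFDO"], *STATS[other])
    print(f"MOD-BFDO vs {other}: z = {z:.3f}")
```

Under the usual two-sided 5% threshold of |z| > 1.96, only the comparisons against SC_SOBS and GMM_Zivk would exceed it; that reading is a standard convention and is not stated in the source.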

Evaluation of our method on the CDnet 2014 dataset

| Category | RE | SP | FPR | FNR | PWC | F-M | PR |
|---|---|---|---|---|---|---|---|
| Baseline | 0.9577 | 0.9911 | 0.0021 | 0.0423 | 0.3634 | 0.9409 | 0.9432 |
| Bad weather | 0.8950 | 0.9970 | 0.0004 | 0.1053 | 0.5212 | 0.8834 | 0.8723 |
| Dynamic background | 0.8839 | 0.9989 | 0.0013 | 0.2332 | 0.6121 | 0.9051 | 0.9272 |
| Shadow | 0.8704 | 0.9917 | 0.0082 | 0.1295 | 1.6663 | 0.8785 | 0.8869 |
| Camera jitter | 0.8154 | 0.9945 | 0.0057 | 0.1864 | 1.2627 | 0.8332 | 0.8515 |
| Low framerate | 0.7610 | 0.9934 | 0.0061 | 0.2492 | 0.9064 | 0.6800 | 0.6146 |
| Average | 0.8639 | 0.9944 | 0.0039 | 0.1576 | 0.7220 | 0.8535 | 0.8492 |
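For reference, the column abbreviations can be read as the standard change-detection metrics: recall (RE), specificity (SP), false positive rate (FPR), false negative rate (FNR), percentage of wrong classifications (PWC), F-measure (F-M), and precision (PR). The sketch below shows how these are conventionally derived from pixel-level confusion counts. It assumes the table follows those conventional definitions; the `Confusion` class, the `metrics` function, and the example counts are hypothetical and not taken from the source.

```python
from dataclasses import dataclass


@dataclass
class Confusion:
    """Pixel-level confusion counts for a foreground/background mask."""
    tp: int  # foreground pixels correctly detected
    fp: int  # background pixels wrongly marked as foreground
    fn: int  # foreground pixels missed
    tn: int  # background pixels correctly rejected


def metrics(c: Confusion) -> dict:
    """Compute the conventional change-detection metrics from counts."""
    recall = c.tp / (c.tp + c.fn)
    precision = c.tp / (c.tp + c.fp)
    return {
        "RE": recall,
        "SP": c.tn / (c.tn + c.fp),
        "FPR": c.fp / (c.fp + c.tn),
        "FNR": c.fn / (c.tp + c.fn),
        # PWC is expressed as a percentage of all classified pixels.
        "PWC": 100.0 * (c.fn + c.fp) / (c.tp + c.fn + c.fp + c.tn),
        "F-M": 2 * precision * recall / (precision + recall),
        "PR": precision,
    }


# Hypothetical counts, for illustration only.
example = metrics(Confusion(tp=90, fp=10, fn=10, tn=890))
for name, value in example.items():
    print(f"{name}: {value:.4f}")
```

With these definitions FNR equals 1 − RE and FPR equals 1 − SP for any single set of counts, which gives a quick sanity check when values are computed per sequence before averaging.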

Comparison of Average Frames Per Second (FPS) Across Three Source Video Sequences

| Methods | 320×240 | 352×288 | 720×480 |
|---|---|---|---|
| SC_SOBS [25] | 9.8 | 8.7 | 3.4 |
| SuBSENSE [24] | 3.3 | 2.8 | 1.6 |
| GMM_Zivk [26] | 21.6 | 18.1 | 13.8 |
| MOD-BFDO | 5.5 | 4.7 | 3.2 |

Comparative assessment of F-measure in six categories using four methods. Each row presents results specific to each method; each column displays the average scores in each category.

| Methods | Baseline | Bad weather | Dynamic background | Shadow | Camera jitter | Low framerate | Overall |
|---|---|---|---|---|---|---|---|
| DeepBS [27] | 0.9580 | 0.8301 | 0.8761 | 0.9304 | 0.8990 | 0.6002 | 0.8490 |
| SC_SOBS [25] | 0.9333 | 0.6620 | 0.6686 | 0.7786 | 0.7051 | 0.5463 | 0.7158 |
| SuBSENSE [24] | 0.9503 | 0.8619 | 0.8177 | 0.8646 | 0.8152 | 0.6445 | 0.8257 |
| GMM_Zivk [26] | 0.8382 | 0.7406 | 0.6328 | 0.7322 | 0.5670 | 0.5065 | 0.6696 |
| MOD-BFDO | 0.9409 | 0.8834 | 0.9051 | 0.8785 | 0.8332 | 0.6800 | 0.8535 |

Comparison of our method with prominent existing methods on the CDnet 2014 dataset

| Methods | Avg. RE | Avg. PR | Avg. PWC | Avg. F-M |
|---|---|---|---|---|
| DeepBS [27] | 0.8312 | 0.8712 | 0.6373 | 0.8490 |
| SC_SOBS [25] | 0.8068 | 0.7141 | 2.1462 | 0.7158 |
| SuBSENSE [24] | 0.8615 | 0.8606 | 0.8116 | 0.8257 |
| GMM_Zivk [26] | 0.7155 | 0.6722 | 1.7052 | 0.6696 |
| MOD-BFDO | 0.8639 | 0.8492 | 0.72202 | 0.8535 |